by Sarah Wells

Following a nationwide call last summer for social equity and anti-racism, Princeton undergraduates returned to their classes in the fall ready to transform their computer science skills into a force for social good.

From left to right: Khandaker Momataz (worked on the NJ Fairshare Housing group project), Anika Duffus and Sonia Gu (both worked on the TASK scheduler group project), Carina Lewandowski (member of the JuST student group), and Wendy Ho (attended Prof. Engelhardt's independent work seminar). Photo by Aaron Nathans.

Designing intuitive software and analyzing complex datasets are the bread and butter of any computer scientist. These skills can be used to build intelligent machines or predict financial patterns, but in two computer science courses this fall semester, students learned how the same skills can promote social equity in their local communities.

In fall courses led by computer science professors Barbara Engelhardt and Bob Dondero, students explored unequal treatment of Black Americans hidden within datasets and used software to improve crucial work and housing systems in the Princeton area.

“Right now, so many people fail to understand how generalizable the racism that Black Americans experience is,” says Engelhardt. “They hear a few stories and assume that not all Black Americans are stopped by police and that the person telling the story had a bad experience with a bad apple cop. The data show that these experiences are ubiquitous… [and] tell the story of racism by design.”

For Wendy Ho '21, a computer science major who took Professor Engelhardt’s independent work seminar “Machine Learning for Social Justice: Data Analysis to Study the Black Lives Matter Movement,” the power of data analysis lies in its ability to paint a “clearer picture” of inequality that might otherwise be ignored.

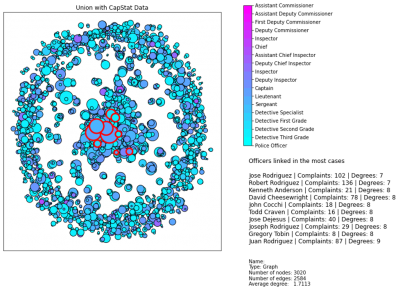

For her project, Ho analyzed 30 years of complaints filed against the New York Police Department and converted them into a network graph that made it easier to see trends of misconduct between police officers and Black Americans.

“Historically, the data on citizen complaints of the police has been hidden from view of the public, making it hard to find trends and identify police that have multiple misconduct complaints,” explains Ho. “By looking at this dataset and by demanding that more data like this be public and transparent, we can understand how policing could better serve the public.”
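To make the idea of a complaint network concrete, here is a minimal sketch of how complaint records might be turned into a graph. It is not Ho's code or data: the record layout, the officer and complaint identifiers, and the function names are all assumptions made for illustration. Officers are nodes, an edge links two officers named in the same complaint, and a simple count surfaces officers with repeated complaints.

```python
from collections import Counter, defaultdict
from itertools import combinations

# Hypothetical records of (complaint_id, officer_id). Illustrative only, not real data.
COMPLAINTS = [
    ("c1", "officer_A"),
    ("c1", "officer_B"),
    ("c2", "officer_A"),
    ("c3", "officer_A"),
    ("c3", "officer_C"),
    ("c4", "officer_D"),
]


def build_co_complaint_graph(records):
    """Return (complaints per officer, weighted edges between officers
    who were named in the same complaint)."""
    officers_by_complaint = defaultdict(set)
    for complaint_id, officer_id in records:
        officers_by_complaint[complaint_id].add(officer_id)

    complaint_counts = Counter()
    edge_weights = Counter()
    for officers in officers_by_complaint.values():
        complaint_counts.update(officers)
        # Sort so each pair of officers maps to one undirected edge key.
        for a, b in combinations(sorted(officers), 2):
            edge_weights[(a, b)] += 1
    return complaint_counts, edge_weights


def officers_with_repeat_complaints(complaint_counts, minimum=2):
    """List officers named in at least `minimum` distinct complaints."""
    return sorted(o for o, n in complaint_counts.items() if n >= minimum)


counts, edges = build_co_complaint_graph(COMPLAINTS)
repeat = officers_with_repeat_complaints(counts)
```

In this toy example only `officer_A` appears in more than one complaint. A real analysis would also need to handle duplicate filings, missing identifiers, and the context behind each complaint.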

But not all data, or datasets, are created equal. Omissions or misrepresented data can introduce harmful bias into already unequal systems. Some facial recognition systems, for example, have been shown to be less accurate in identifying non-white faces, resulting in Black individuals being misidentified and accused of crimes they did not commit.

“Data can be used to do harm when we forget that data only tells a partial picture,” says Ho. “The dataset itself matters as much as what the data might tell.”

For example, if a machine learning algorithm were only ever trained on pictures of dogs, it would have trouble recognizing a cat. But instead of this proving that cats don’t exist, it simply highlights a dangerous bias in the dataset itself. The same can be said for datasets that misrepresent Black, indigenous, and other people of color.
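One simple safeguard against this kind of gap is to audit how each category is represented before training anything. The sketch below is an illustrative assumption, not a method described by the course: the function name, the 10 percent threshold, and the toy labels are all hypothetical.

```python
from collections import Counter


def underrepresented_labels(labels, expected_labels, min_share=0.1):
    """Return {label: share} for every expected label whose share of the
    dataset falls below `min_share`, including labels that never appear."""
    counts = Counter(labels)
    total = len(labels)
    flagged = {}
    for label in expected_labels:
        share = counts[label] / total if total else 0.0
        if share < min_share:
            flagged[label] = share
    return flagged


# Toy training set dominated by dogs (hypothetical data).
training_labels = ["dog"] * 95 + ["cat"] * 5
flagged = underrepresented_labels(training_labels, ["dog", "cat", "rabbit"])
```

Here both `cat` and `rabbit` are flagged: one is barely present and the other is absent. The check cannot say what a fair share is; that judgment still depends on who the system is meant to serve.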

With this delicate balance in mind, Ho believes that data analysis has the biggest potential for good when used to analyze under-represented areas, such as using a predictive algorithm to study the police instead of citizens.

“Data can be used for good when we take care to increase transparency and trust in our institutions,” says Ho.

In Professor Dondero’s “Advanced Programming Techniques” course, two groups of students spent their semester exploring how software design can be used to make nonprofits’ websites easier to use.

An important objective for the course, says Professor Dondero, is for “students to learn about software engineering in a rich context that they find rewarding.”

In particular, the two student groups focused on designing software for two local nonprofit groups: NJ Fairshare Housing Center and Trenton Area Soup Kitchen (TASK).

In both cases, the student programmers started their projects by working with the newly formed Princeton group, Technology for a Just Society (JuST), which focuses on providing an inclusive tech culture and forging connections between young Princeton technologists and nonprofits.

“JuST offers a community where students can explore how technology can deepen social inequity, as well as how it can be leveraged to advance social justice and address other major societal challenges,” says Amina Elgamal '22, a co-founder of JuST and computer science major.

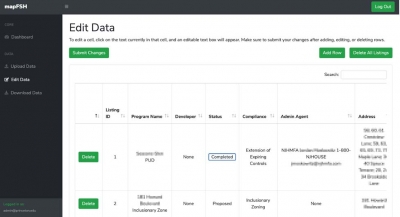

Veronica Abebe '22, a computer science major who worked on the NJ Fairshare Housing Center software, says that she and her group were immediately drawn to the nonprofit's mission of “end[ing] discriminatory or exclusionary housing patterns” and wanted to explore how their classroom coding skills could be used to further the cause.

The main problem at hand, explains another member of the group, Julia Ruskin '22, was that the nonprofit had no centralized system to display all their available units and no standard system for categorizing data such as addresses.

“We aimed to solve this problem by creating a website that displays all of their available listings on a map and lets users search for housing based on their specific preferences,” explains Ruskin. “Our site also allows the Fair Share Housing Center to add new units as they’re developed, ensuring that low-income communities across New Jersey are able to access the latest information about affordable housing opportunities.”
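A preference-based search of this kind can be sketched in a few lines. This is not the team's implementation: the `Listing` fields, the address normalization rule, and the filter parameters are assumptions chosen only to illustrate standardizing addresses and filtering units.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Listing:
    # Hypothetical fields; the real site's data model is not described in the article.
    address: str
    town: str
    bedrooms: int
    monthly_rent: int


def normalize_address(raw: str) -> str:
    """Collapse extra whitespace and uppercase, so that variants of the
    same address compare equal."""
    return " ".join(raw.split()).upper()


def search(listings: List[Listing], town: Optional[str] = None,
           min_bedrooms: int = 0, max_rent: Optional[int] = None) -> List[Listing]:
    """Return listings matching every preference the user supplied."""
    results = []
    for item in listings:
        if town is not None and item.town.lower() != town.lower():
            continue
        if item.bedrooms < min_bedrooms:
            continue
        if max_rent is not None and item.monthly_rent > max_rent:
            continue
        results.append(item)
    return results


# Made-up example units.
units = [
    Listing(normalize_address(" 12  main st. "), "Trenton", 2, 1100),
    Listing(normalize_address("4 Oak Ave"), "Princeton", 1, 1400),
    Listing(normalize_address("9 Elm Rd"), "Trenton", 3, 1600),
]
matches = search(units, town="trenton", min_bedrooms=2, max_rent=1200)
```

With these made-up units, the search returns only the two-bedroom Trenton listing. A map view would additionally need coordinates for each address, which is omitted here.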

While there are a few things the team would have done differently in hindsight, such as optimizing their website design for mobile as well as desktop browsing, the group says that ultimately they were “really excited” by how well their design was received.

“We all believe as a team that this project has inspired us to use our classroom tools and techniques to help people and communities around us,” says group member Khandaker Momataz '22, speaking for the team. “Many times, we find ourselves very much so focused on the here and now in regards to college like our homework assignments, exams, and social activities. Nevertheless, we’ve all become inspired, in large part because of this project, to work on projects for others without external motivation.”

These are feelings that Sonia Gu '22 and Anika Duffus '22, who major in electrical and computer engineering and in computer science, respectively, echoed in describing their experience designing software for TASK.

The idea that their project would have a real impact on TASK and its clients was daunting at first, Gu says, but she was “excited at the prospect of working on a project that could reach so many people, especially those outside of the Princeton bubble.”

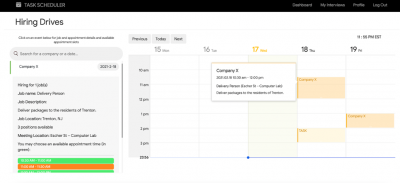

The main goal for this project was to design a website with two interfaces: one for employers to post jobs and one for TASK clients to sign up for them. This functionality is similar to TASK’s in-person hiring drives. Additional functionality, such as event scheduling, was added to make the software a more multi-purpose task scheduler.

Despite a total 15-hour time difference among Gu, Duffus, and their teammates, they were able to deliver scheduling software that focused on providing easily accessible notifications to clients to improve appointment attendance.

Duffus says the project provided a source of fulfillment she never expected to have when finishing a programming project.

“I had great satisfaction fixing and improving code for hours, and it was amazing seeing how TASK appreciated our progress,” says Duffus. “I have always wanted to give back to my community using programming. However, being bogged down with school I never follow through because I think I need to do something big. I learnt that [giving back] can be something small and it can be actually fun.”

A group photo of the TASK team connecting through Zoom for their project. From top left: Anika Duffus, Sonia Gu, Andrew Paul, Pang Nganthavee, and Kwan Sirisakunngam. Image courtesy of the student group.

This taste for the true social benefits of coding and data analysis is something that Jennifer Rexford, chair of Princeton’s computer science department, hopes to continue to instill throughout the department.

"As computer scientists, we recognize a responsibility to ensure that the technology we create is worthy of the trust society increasingly places in it,” says Rexford. “We can go beyond a “do no harm” view of ethical technology to a place where technology is a lever for righting wrongs and making the world a better place. These course projects, independent-work projects, and the JuST student group are all examples of the change we want to see in the world, and how we want our students to see themselves as the possible agents of that change."