Thomas A. Funkhouser, Nicolas Tsingos, Ingrid Carlbom, Gary Elko, Mohan Sondhi, and Jim West

The primary challenge in acoustic modeling is computing the reverberation paths from a sound's source position to a listener's receiving position. Because sound may travel from source to receiver via a multitude of reflection, transmission, and diffraction paths, accurate simulation is extremely computationally intensive. Prior approaches to acoustic simulation have used the image source method, whose computational complexity grows as O(n^r) (for n surfaces and r reflections), or ray tracing methods, which are prone to sampling error and must trace many rays to reduce it. Due to the computational cost of these methods, interactive acoustic simulation has generally been considered impractical.
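To make the O(n^r) growth concrete, the following is a minimal sketch that counts the virtual sources a naive image source method would enumerate. It assumes every surface can mirror every image except the one that just produced it, with no visibility culling; the function name `image_source_count` is hypothetical and is not part of the system described here.

```python
def image_source_count(n_surfaces: int, max_order: int) -> int:
    """Upper bound on virtual sources for reflection orders 1..max_order.

    Assumption: no visibility or validity culling is applied, so each
    image is mirrored across every surface except the one that created it.
    """
    total = 0
    per_order = n_surfaces  # first order: one image per surface
    for _ in range(max_order):
        total += per_order
        per_order *= (n_surfaces - 1)  # next order: mirror across the other surfaces
    return total


if __name__ == "__main__":
    for order in (1, 2, 4, 8):
        print(order, image_source_count(100, order))
```

Even for a modest model of 100 surfaces, the count at eighth order is far beyond what can be enumerated interactively, which is the motivation for culling candidate paths geometrically.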

We have developed data structures and algorithms to enable interactive simulation of acoustic effects in large 3D virtual environments. Our approach is to precompute and store a spatial data structure that can later be used during an interactive session to evaluate reverberation paths. The data structure is a "beam tree" that maps the convex pyramidal beam-shaped paths of significant transmission and specular reflection from a source point through 3D space. The beam tree is generated by: 1) partitioning 3D space into convex polyhedral regions, 2) computing the convex polygonal boundaries between regions, and 3) recursively splitting and tracing convex polyhedral beams from a source point through region boundaries (e.g., reflecting beams at opaque boundaries). The precomputed beam tree can be used to compute specular reflection, transmission, and diffraction paths from a source position to any point in space at interactive rates. The lengths and directions of the computed reverberation paths may be used to spatialize audio source signals for a receiver moving under the user's interactive control.
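As an illustration of how a specular path's length can be evaluated once the sequence of reflecting surfaces is known, the sketch below mirrors the source across each reflecting plane in turn and measures the straight-line distance from the final image to the receiver. This is the standard mirror-image construction, not necessarily the authors' implementation; the names `mirror_point` and `specular_path_length` are hypothetical, and the code assumes the reflection sequence has already been validated (which is the role the beam tree plays).

```python
import math


def mirror_point(p, plane_point, plane_normal):
    """Reflect point p across the plane through plane_point with the given normal."""
    n_len = math.sqrt(sum(c * c for c in plane_normal))
    n = [c / n_len for c in plane_normal]  # unit normal
    # Signed distance from p to the plane.
    d = sum((pc - qc) * nc for pc, qc, nc in zip(p, plane_point, n))
    return tuple(pc - 2.0 * d * nc for pc, nc in zip(p, n))


def specular_path_length(source, receiver, planes):
    """Length of a specular path that reflects off `planes` in order.

    Assumption: the sequence is geometrically valid (no occlusion checks here).
    Each plane is a (point_on_plane, normal) pair.
    """
    image = source
    for plane_point, plane_normal in planes:
        image = mirror_point(image, plane_point, plane_normal)
    return math.dist(image, receiver)


if __name__ == "__main__":
    floor = ((0.0, 0.0, 0.0), (0.0, 0.0, 1.0))
    # Source 1 m above the floor, receiver 2 m above it and 4 m away.
    print(specular_path_length((0.0, 0.0, 1.0), (4.0, 0.0, 2.0), [floor]))
```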

These data structures and algorithms have been integrated into a system for interactive acoustic modeling. The system takes as input: 1) a set of polygons describing the geometry and acoustic surface properties of the environment, and 2) a set of anechoic audio source signals at fixed locations. It outputs an audio signal auralized according to the computed delays, directions, and attenuations of the reverberation paths from each source to the receiver point. The receiver point can be moved interactively by the user, allowing real-time exploration of the acoustic environment.
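The mapping from reverberation paths to delays and attenuations can be sketched as follows. This is a simplified illustration under stated assumptions, not the system's actual auralization: it assumes a speed of sound of 343 m/s, 1/distance spreading loss, a single frequency-independent reflection coefficient for all surfaces, and a 22,050 Hz sample rate. Directional (stereo) spatialization is omitted, and the names `path_to_tap` and `impulse_response` are hypothetical.

```python
SPEED_OF_SOUND = 343.0  # m/s; assumed value for air at room temperature


def path_to_tap(length_m, n_reflections, reflection_coeff=0.9, sample_rate=22050):
    """Convert one path into a (delay_in_samples, gain) pair."""
    delay_samples = round(length_m / SPEED_OF_SOUND * sample_rate)
    # Assumed attenuation: per-bounce loss times 1/distance spreading,
    # clamped so very short paths do not produce gains above 1.
    gain = (reflection_coeff ** n_reflections) / max(length_m, 1.0)
    return delay_samples, gain


def impulse_response(paths, sample_rate=22050):
    """Sum the taps of all (length_m, n_reflections) paths into one response."""
    taps = [path_to_tap(length, bounces, sample_rate=sample_rate)
            for length, bounces in paths]
    if not taps:
        return []
    response = [0.0] * (max(delay for delay, _ in taps) + 1)
    for delay, gain in taps:
        response[delay] += gain
    return response


if __name__ == "__main__":
    # A direct path of 5 m and a first-order reflection of 8 m.
    ir = impulse_response([(5.0, 0), (8.0, 1)])
    print(len(ir), max(ir))
```

Convolving an anechoic source signal with such a response yields a monaural auralized signal; as the receiver moves, the path lengths change and the response would be recomputed.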

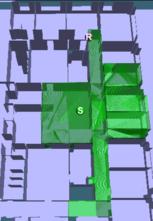

Visualization of priority-driven beam tracing.

The images show 4000 beams traced from a source (labeled 'S') to a receiver ('R') without (left image) and with (right image) priority ordering.

Visualization of bidirectional beam tracing.

The images show the beams traced to find the same reflection paths (blue) with unidirectional (above) and bidirectional (below) algorithms. The unidirectional method requires 59,069 beams, while the bidirectional method requires 39,743.

Visualization of adaptive real-time beam tracing.

The circles around each source/receiver represent the number of beams traced from that location to achieve the best overall acoustical simulation.

This video shows how reflection paths from a stationary source (white dot) to a moving receiver (purple dot) can be updated at interactive rates. The method is demonstrated for increasingly complex environments and higher-order reflections. As an example, eighth-order specular reflection paths can be updated at interactive rates in building environments with more than 10,000 polygons.

Click here for an AVI file with the video shown at SIGGRAPH 99 (2:36 minutes, 73 MB download).

Our system produces 22 kHz, 16-bit stereo audio; the AVI file has 11 kHz, 8-bit stereo sound.