COS 429 - Computer Vision

Fall 2016

Sliding-window: Now that you have a trained face classifier, the next step is to run it at many locations within an image. A "sliding window" detector does this by brute force: it considers every window of pixels (36x36, in our case), computes the HoG descriptor on it, and runs the descriptor through the classifier. In practice, advancing the detection window by one pixel at a time is very slow and probably unnecessary, so you can use a "stride" parameter that specifies how far the window advances each time. For example, with a stride of 3, you would consider windows starting at (1,1), (4,1), (7,1), ..., (1,4), (4,4), (7,4), etc.
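As a rough illustration of the stride idea, the following sketch lists the window start positions for a given stride. The image size is a made-up example, and the variable names (`row_starts`, `col_starts`) are illustrative rather than part of the starter code.

```matlab
% Enumerate the top-left corners of all 36x36 windows for a given stride.
% The image size below is a made-up example, not taken from the dataset.
img_height = 100;
img_width  = 120;
window = 36;
stride = 3;

% The last valid start index keeps the whole window inside the image
% (MATLAB indices are 1-based).
row_starts = 1:stride:(img_height - window + 1);
col_starts = 1:stride:(img_width  - window + 1);

fprintf('%d x %d = %d windows\n', numel(row_starts), numel(col_starts), ...
        numel(row_starts) * numel(col_starts));
```

With a stride of 1 the same code visits every window, which shows how quickly the count grows as the stride shrinks.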

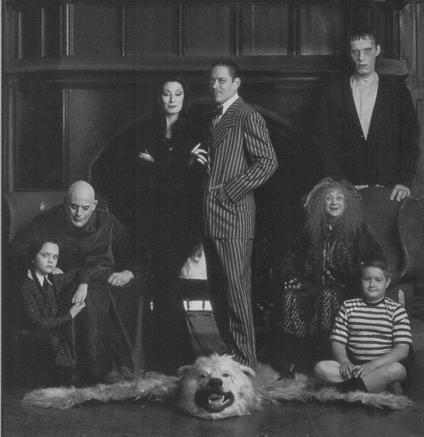

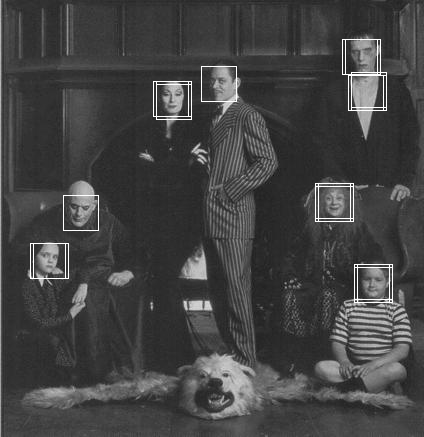

The output of this detector is a probability map similar to the one shown below.

Input image | Face probability map
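One possible way to build such a map is sketched below. It assumes one map entry per window position, which may differ from the layout the starter code expects. The `score_fn` handle is a hypothetical stand-in for "compute the HoG descriptor and run the classifier"; here it just returns the mean intensity so that the example runs on its own.

```matlab
% Sketch: fill a probability map by scoring each window.
% score_fn is a hypothetical stand-in for "HoG descriptor + classifier";
% here it simply returns the mean intensity of the window.
score_fn = @(patch) mean(patch(:));

img = rand(60, 80);          % synthetic grayscale image, values in [0, 1]
window = 36;
stride = 3;

row_starts = 1:stride:(size(img, 1) - window + 1);
col_starts = 1:stride:(size(img, 2) - window + 1);
probmap = zeros(numel(row_starts), numel(col_starts));

for i = 1:numel(row_starts)
    for j = 1:numel(col_starts)
        r = row_starts(i);
        c = col_starts(j);
        patch = img(r:r+window-1, c:c+window-1);   % current 36x36 window
        probmap(i, j) = score_fn(patch);
    end
end
```

In a real detector, `score_fn` would be replaced by your own descriptor and classifier calls from Part II.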

You can now do the usual sorts of postprocessing: simple thresholding, nonmaximum suppression, etc. Here are the faces detected by thresholding at a probability of 0.95 (with stride = 3):

Notice the multiple overlapping detections: nonmaximum suppression would be necessary to get rid of them. Also notice the false-positive detections on Lurch's shirt. The shirt shows up quite strongly in the probability map, and the training data contains a number of low-contrast faces, so it might be difficult to eliminate this false positive.
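The thresholding and nonmaximum suppression steps could look roughly like the sketch below. It uses a simple greedy scheme based on intersection-over-union, which is one common choice and not necessarily the one intended here. The detections, the `[x, y, score]` layout, and the `overlap_thresh` value are assumptions made for illustration.

```matlab
% Sketch: threshold window scores, then greedy nonmaximum suppression.
% boxes holds one window per row as [x, y, score]; all windows are 36x36.
% The values are made up for illustration.
boxes = [10 10 0.99;
         13 10 0.97;
         10 13 0.96;
         80 40 0.98;
         50 50 0.60];
window = 36;
prob_thresh = 0.95;     % same threshold as in the text
overlap_thresh = 0.5;   % assumed intersection-over-union cutoff

boxes = boxes(boxes(:, 3) >= prob_thresh, :);   % simple thresholding
[~, order] = sort(boxes(:, 3), 'descend');      % strongest detections first
boxes = boxes(order, :);

keep = [];
for k = 1:size(boxes, 1)
    suppressed = false;
    for m = keep
        % Overlap of two equal-size, axis-aligned squares.
        ox = max(0, window - abs(boxes(k, 1) - boxes(m, 1)));
        oy = max(0, window - abs(boxes(k, 2) - boxes(m, 2)));
        inter = ox * oy;
        iou = inter / (2 * window^2 - inter);
        if iou > overlap_thresh
            suppressed = true;
            break;
        end
    end
    if ~suppressed
        keep(end + 1) = k;
    end
end
detections = boxes(keep, :);   % surviving windows, strongest first
```

Each window is kept only if it does not overlap too much with a stronger window that was already kept, so a cluster of overlapping detections collapses to its highest-scoring member.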

Do this:

First, in order to avoid re-training the classifier every time, save the results of your face classifier after you have run it for the final time, preferably with a large training set size. A simple way of doing this is to add the following line to test_face_classifier.m from part II:

save('face_classifier.mat', 'params', 'orientations', 'wrap180');

These variables can then be read back with load('face_classifier.mat'), as is done by the starter code below.
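To check that the save and load round trip behaves as expected, a small sketch like the following may help. The values are placeholders, and the file name face_classifier_demo.mat is a hypothetical one chosen so that the example does not overwrite your real classifier file.

```matlab
% Placeholder values; the real ones come from your trained classifier.
params = zeros(10, 1);
orientations = 9;
wrap180 = true;

% Hypothetical demo file name, to avoid clobbering face_classifier.mat.
save('face_classifier_demo.mat', 'params', 'orientations', 'wrap180');
clear params orientations wrap180;

% Loading into a struct makes it easy to check what the file contains.
saved = load('face_classifier_demo.mat');
assert(all(isfield(saved, {'params', 'orientations', 'wrap180'})));
```

Calling load without an output argument, as in the text, places the variables directly in the workspace instead.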

Next, download the starter code and dataset (about 600 kB) for this part. It contains the following files:

Once you download the code, copy over logistic_prob.m and hog36.m from Part II, as well as your saved face_classifier.mat.

Next, implement find_faces_single_scale, and test it on an image:

img = imread('face_data/single_scale_scenes/addams-family.jpg');

load('face_classifier.mat');

[outimg, probmap] = find_faces_single_scale(img, 3, 0.95, params, orientations, wrap180);

figure; imshow(outimg);

figure; imshow(probmap);

Once you have a working detector, run test_single_scale to see outputs on a bunch of images.

Save and submit your face detection results for a few images showing particularly good or particularly bad performance. Discuss when your detector tends to fail.

Optional extra credit: