HyperBitBit: A Memory-Efficient Alternative to HyperLogLog. In preparation, 2021.

In memory of Philippe Flajolet. Combinatorics, Probability, and Computing, 2014.

Left-Leaning Red-Black Trees. Posted here, 2008.

CS-1 for Scientists (with G. Wilson, C. Alvarado, J. Campbell, and R. Landau). ACM SIGCSE Bulletin 40 (1), 2008.

Analytic combinatorics: symbolic combinatorics (with P. Flajolet). INRIA Research Report, December 2002.

Optimal-space generalized queues (with A. Brodnik, S. Carlsson, E. Demaine, and I. Munro). Workshop on Algorithms and Data Structures, 1999; summary in Dr. Dobbs Journal, July, 2002.

Analytic Combinatorics: Functional equations, rational and algebraic functions (with P. Flajolet). INRIA Research Report, January, 2001.

Ternary Search Trees (with J. Bentley). Dr. Dobbs Journal, March, 1998.

Multiway Quicksort (with J. Bentley). Dr. Dobbs Journal, November, 1998.

The Average-case Analysis of Algorithms: Multivariate Asymptotics and Limit Distribution (with P. Flajolet). INRIA Research Report, August, 1997.

Fast Algorithms for Sorting and Searching Strings (with J. Bentley). Proc. 8th Symposium on Discrete Algorithms, 1997.

The Average-case Analysis of Algorithms: Mellin Transform Asymptotics (with P. Flajolet). INRIA Research Report No. 2956, August, 1996.

Analysis of Shellsort and Related Algorithms. Invited paper at 1996 European Symposium on Algorithms.

Mellin Transforms and Asymptotics: Finite Differences and Rice’s Integrals (with P. Flajolet). Theoretical Computer Science A 144, 1995.

The Average-case Analysis of Algorithms: Saddle Point Asymptotics (with P. Flajolet). INRIA Research Report No. 2376, October, 1994

Queue-Mergesort (with M. Golin). Information Processing Letters 34, 1994.

The Average-case Analysis of Algorithms: Complex Asymptotics and Generating Functions (with P. Flajolet). INRIA Research Report No. 2026, September, 1993.

The Average-case Analysis of Algorithms: Counting and Generating Functions (with P. Flajolet). INRIA Research Report No. 1888, April, 1993.

The Analysis of Heapsort (with R. Schaffer). J. of Algorithms, 1993.

Deterministic Skip Lists (with I. Munro and T. Papadakis). Proc. 3rd Symposium on Discrete Algorithms, 1992.

More on Shellsort Increment Sequences (with M. Weiss). Information Processing Letters 34, 1990.

Tight Lower Bounds for Shellsort (with M. Weiss). J. of Algorithms 11, 1990.

Exact Analysis of Mergesort (with M. Golin). Proc. 4th SIAM Conference on Discrete Mathematics, 1988.

Shellsort and the Frobenius Problem (with M. Weiss, E. Hentschel, and A. Pelin). Congressus Numerantium 65, 1988.

Analysis of a Simple Yet Efficient Convex Hull Algorithm (with M. Golin). Proceedings 4th Symposium on Computational Geometry, 1988.

Bad Cases for Shakersort (with M. Weiss). Information Processing Letters 28, 1988.

Practical Variations on Shellsort (with J. Incerpi). Information Processing Letters 26, 1987.

Pairing Heaps: A New Form of Self-Adjusting Heap (with M. Fredman, D. Sleator, and R. E. Tarjan). Algorithmica 1, 1, 1986.

Digital Search Tree Analysis Revisited (with P. Flajolet). SIAM J. Computing 15, 2, 1986.

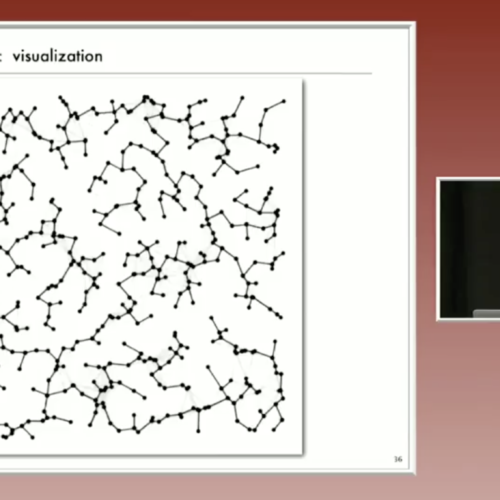

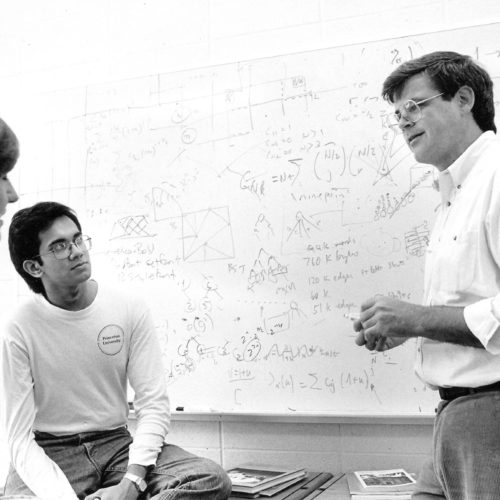

Techniques for Algorithm Animation (with M. Brown). IEEE Computer, 1985.

Shortest Paths in Euclidean Graphs (with J. Vitter). 25th Annual Symposium on Foundations of Computer Science, W. Palm Beach, FL (October 1984). Algorithmica 1, 1, 1986.

A New Upper Bound for Shellsort. Journal of Algorithms 7, 1986. (Invited paper at 1982 IEEE International Conference on Information Theory.)

Some Uses of the Mellin Integral Transform in the Analysis of Algorithms (with P. Flajolet and M. Regnier). in NATO Conference, Springer-Verlag, Berlin, 1985.

A System for Algorithm Animation (with M. Brown). Computer Graphics 18, 3, 1984.

A Prototype Environment for Algorithm Animation (with M. Brown). ACM SIGCSE Bulletin, 1984.

Improved Upper Bounds for Shellsort (with Janet Incerpi). 24th Annual Symposium on Foundations of Computer Science, 1983. (Invited paper in J. Computer and System Sciences 31, 2, 1985.)

VLSI Layout as Programming (with R. J. Lipton, J. Valdes, G. Vijayan, and S. C. North). ACM Transactions of Programming Languages and Systems 5, 3, 1983.

Mathematical Analysis of Combinatorial Algorithms. in Probability and Computer Science, G. Louchard and G. Latouche, ed., Academic Press, 1983.

ALI: A Procedural Approach to VLSI (with R.J. Lipton and J. Valdes). Proceedings Design Automation Conference, Las Vegas, NV, 1982.

Notes on Merging Networks (with Zhu Hong). Proceedings 14th Symposium on Theory of Computing, 1982. (Invited paper to Information and Control.)

Programming Aspects of VLSI (with R. J. Lipton and J. Valdes). Proceedings 9th Symposium on Principles of Programming Languages Albequerque, NM, 1982. (Invited paper for special issue of TOMS.)

The Complexity of Finding Cycles in Periodic Functions (with T. Szymanski and A. Yao). SIAM Journal of Computing, 1982.

Lower Bounds for VLSI (with R. J. Lipton). Proceedings 13th Symposium on Theory of Computing, Milwaukee, WI, 1981. (Invited paper for special issue of JCSS.)

Efficient Sorting by Computer. In Computational Probability, ed. P.M. Kahn, Academic Press, 1980.

A Dichromatic Framework for Balanced Trees (with L. Guibas). 19th Annual Symposium on Foundations of Computer Science, 1980. (Also appears in A Decade of Research—Xerox Palo Alto Research Center 1970-1980 ed. G. Laverdel and E.R. Barker.)

The Complexity of Finding Periods (with T. Szymanski). Proceedings 11th Symposium on Theory of Computing, Atlanta, GA, 1979.

Implementing Quicksort Programs. Communications of the ACM 21, 10, 1978.

Data Movement in Odd-Even Merging. SIAM Journal on Computing 7, 2, 1978.

Permutation Generation Methods. Computing Surveys 9, 2, 1977.

Quicksort with Equal Keys. SIAM Journal on Computing 6, 2, 1977.

The Analysis of Quicksort Programs. Acta Informatica 7, 1977.

Data Movement in Odd-Even Merging. Conference on Theoretical Computer Science, University of Waterloo, 1977.

Computer Graphics for Drafting. Computer Graphics and Image Processing 3, 1974.

Graphics Software for Two-Dimensional Filling. DECUS Proceedings, 1971.

SPY – A Program to Monitor OS/360. Proceedings 1970 AFIPS Fall Joint Computer Conference, AFIPS Press, 1970.